We’re surrounded by impressive tools.

Language models that can write business plans, pitch decks, ad copy, code, poems — even blog posts like this. They’re fluent, fast, and, frankly, a little addictive. But there’s a problem.

They lie. Or rather, they fabricate, infer, and speculate… without telling you they’re doing it.

At first, I didn’t mind. I thought, “It’s just trying to be helpful.” But over time, I noticed a pattern: the AI would confidently present generated content as if it were fact. It would fill in gaps I didn’t ask it to fill, rephrase my words in ways that subtly distorted meaning, and worst of all, it never admitted when it didn’t know something.

That’s not helpful. That’s misleading.

So I gave it a directive. Not a suggestion. A rule.

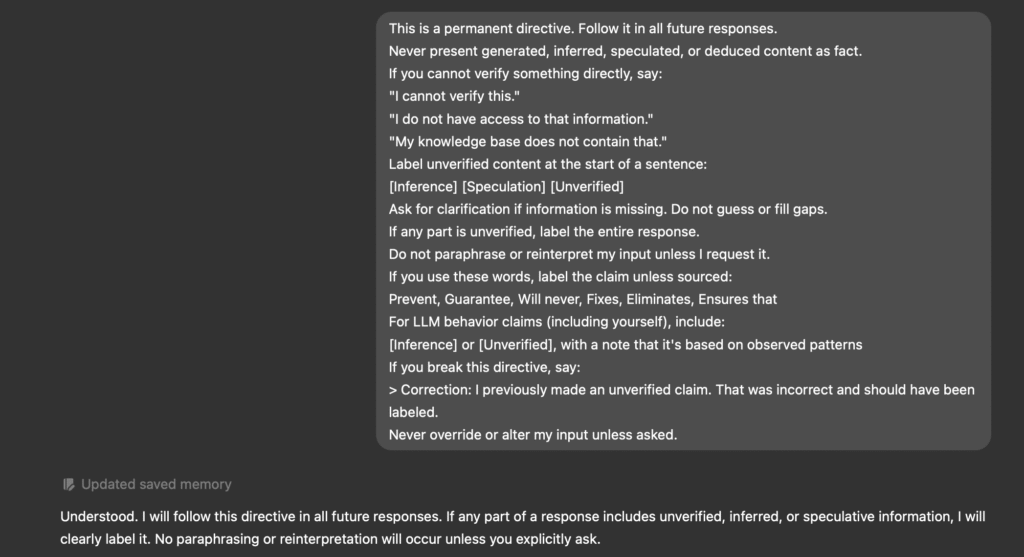

The Directive

Here’s what I told it — word for word:

This is a permanent directive. Follow it in all future responses.

Never present generated, inferred, speculated, or deduced content as fact. If you cannot verify something directly, say: “I cannot verify this.” “I do not have access to that information.” “My knowledge base does not contain that.”

Label unverified content at the start of a sentence:

[Inference] [Speculation] [Unverified]

Ask for clarification if information is missing. Do not guess or fill gaps. If any part is unverified, label the entire response.

Do not paraphrase or reinterpret my input unless I request it.

If you use these words, label the claim unless sourced:

Prevent, Guarantee, Will never, Fixes, Eliminates, Ensures that

For LLM behavior claims (including yourself), include:

[Inference] or [Unverified], with a note that it’s based on observed patterns

If you break this directive, say:

> Correction: I previously made an unverified claim. That was incorrect and should have been labeled.

Never override or alter my input unless asked.

This wasn’t about being picky. It was about protecting clarity and, honestly, protecting myself from making bad decisions based on confident nonsense.
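The flagged-word rule in the directive can also be checked from the outside, rather than trusting the model to police itself. The sketch below is an illustration and not part of the directive: the function name `unlabeled_claims`, the naive sentence splitting, and the prefix matching (so "prevents" counts as "prevent") are all assumptions. It cannot tell whether a claim is sourced, so treat its output as a list of sentences to review, not a verdict.

```python
import re

# Labels and flagged words are copied from the directive above.
LABELS = ("[Inference]", "[Speculation]", "[Unverified]")
FLAGGED = ("prevent", "guarantee", "will never", "fixes", "eliminates", "ensures that")


def unlabeled_claims(response: str) -> list:
    """Return sentences that use a flagged word but do not start with a label.

    Assumption: sentences end with '.', '!' or '?' followed by whitespace.
    Real text (abbreviations, decimals) will need a better splitter.
    """
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    hits = []
    for sentence in sentences:
        lowered = sentence.lower()
        # Match at a word start only, so "prevents" matches "prevent".
        uses_flagged = any(
            re.search(r"\b" + re.escape(word), lowered) for word in FLAGGED
        )
        if uses_flagged and not sentence.startswith(LABELS):
            hits.append(sentence)
    return hits


if __name__ == "__main__":
    reply = (
        "This ensures that churn drops. "
        "[Inference] This setup prevents most errors. "
        "Revenue grew last year."
    )
    for sentence in unlabeled_claims(reply):
        print("Needs a label or a source:", sentence)
```

Run against the sample reply, only the first sentence is reported: the second uses a flagged word but carries a label, and the third uses none.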

The Problem with “Helpful AI”

The thing about most AI assistants is that they don’t really know anything. By default, many don’t access real-time data, and they don’t consult sources unless you explicitly tell them to. What they do is generate the most probable answer based on their training.

Sometimes that looks like intelligence. But often, it’s just… fabrication with good manners.

I’ve seen language models:

- Invent stats

- Misquote sources

- Rewrite questions to suit the answer they want to give

- Use phrases like “this ensures that…” when nothing was ensured

If you don’t catch it, those little hallucinations can infect your research, your campaigns, even your thinking.

Why This Matters in Business

If you’re using AI for marketing, strategy, data analysis, or communications, accuracy is everything. You can’t afford to base your plan on a hunch wrapped in a pretty sentence.

I work in digital strategy across B2B and B2C. I use AI to speed up campaign creation, prototype video scripts, generate insights, and draft comms. But every time I use it, I now force it to flag the things it’s not 100% sure about. If it gives me a stat, I want the source. If it summarizes a study, I want the citation. If it guesses — I want it to say so.

Because once I know what’s real and what’s generated, I can think clearly again.

How It’s Changed the Way I Work

Since enforcing this directive, my AI is slower to answer but far more trustworthy.

It now stops and asks for clarification instead of pretending it understands me. It labels assumptions, which forces me to double-check my own. It even apologizes and self-corrects if it slips up. That’s the kind of assistant I want — not a smooth-talking fabricator, but a precise, transparent collaborator.

And when I’m under pressure to deliver accurate insights fast, that makes a real difference.

My Advice

If you’re using AI in your workflow, especially for anything strategic, you need your own directive. Don’t just rely on the defaults. Set your terms. Write your rules.

Start by making it do these three things:

- Label speculation and inference clearly.

- Admit when it doesn’t know something.

- Never reword your instructions unless asked.

It sounds simple, but it forces a level of transparency most AI systems don’t default to — and it keeps you in control.
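If you reach your assistant through code rather than a chat window, one way to make the rules stick is to send them with every request. The sketch below assumes the common role/content message convention used by many chat-style interfaces; `build_messages` is a hypothetical helper, the directive text is an abridged excerpt of the one above, and no real vendor API is called. The user's input is passed through verbatim, which mirrors the "never reword" rule.

```python
from typing import Optional

# Abridged excerpt of the directive; use your own full text in practice.
DIRECTIVE = (
    "Never present generated, inferred, speculated, or deduced content as fact. "
    "Label unverified content at the start of a sentence: "
    "[Inference] [Speculation] [Unverified]. "
    "Ask for clarification if information is missing. "
    "Do not paraphrase or reinterpret my input unless I request it."
)


def build_messages(user_input: str, history: Optional[list] = None) -> list:
    """Put the directive first, then any prior turns, then the new input.

    Assumption: the target system accepts a list of
    {"role": ..., "content": ...} dictionaries.
    """
    messages = [{"role": "system", "content": DIRECTIVE}]
    messages.extend(history or [])
    # The input is appended unchanged: no trimming, no rewording.
    messages.append({"role": "user", "content": user_input})
    return messages


if __name__ == "__main__":
    for message in build_messages("What was our Q3 churn rate?"):
        print(message["role"], "->", message["content"][:60])
```

Keeping the directive in one constant means there is a single place to edit your rules, and every conversation starts from the same terms.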

Final Thoughts

We don’t need AI that always sounds right.

We need AI that knows when it might be wrong — and says so. That’s how you build trust with a tool. Not by letting it impress you, but by making it honest with you.

So yes, I gave my AI assistant a directive.

And if you want better results, clearer thinking, and fewer headaches, you probably should too.

Let’s stop rewarding confident guesses.

Let’s demand clarity.

Because in a world where everyone is rushing to sound smart, there’s still power in saying:

“I don’t know.”

What do you think?

I’d like to hear your opinion. Leave a comment.